Data Quality

Data quality (DQ) is a data management capability that a company uses to deliver data of the required quality for an intended use. A company must implement the DQ capability at three organizational levels: strategic, tactical, and operational.

At the strategic level, a company should define its data quality strategy linked to specific business and data management strategies.

At the tactical level, a company should develop a data quality policy and standards, establish processes and roles, and set up a data quality framework. Even if the company starts its data quality initiative as a project or program, it should eventually turn the initiative into “business as usual” operations. This requires establishing a business function responsible for the data quality capability.

At the operational level, the company should implement and maintain data quality processes and procedures. DQ professionals need to:

- Gather information and quality requirements
- Perform data profiling and analysis
- Discover, investigate, and resolve DQ issues
- Design and build data quality checks along data chains
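As an illustration of the profiling step, here is a minimal sketch in Python. It assumes records are available as a list of dictionaries; the `profile_column` helper and the sample `contracts` records are hypothetical and are not taken from any specific DQ tool.

```python
from collections import Counter

def profile_column(rows, column):
    """Return basic profile statistics for one column of a list of dict records."""
    values = [row.get(column) for row in rows]
    # Treat None and empty strings as missing values.
    non_null = [v for v in values if v is not None and v != ""]
    return {
        "count": len(values),
        "null_count": len(values) - len(non_null),
        "distinct_count": len(set(non_null)),
        "most_common": Counter(non_null).most_common(1),
    }

# Hypothetical sample records, for illustration only.
contracts = [
    {"contract_id": "C1", "amount": 100.0},
    {"contract_id": "C2", "amount": None},
    {"contract_id": "C2", "amount": 250.0},
]

id_profile = profile_column(contracts, "contract_id")
amount_profile = profile_column(contracts, "amount")
```

In this sample, the profile of `contract_id` shows fewer distinct values than records, which hints at a possible duplicate, and the profile of `amount` shows one missing value. Findings like these are typical starting points for a DQ issue investigation.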

KNOWLEDGE GRAPHS AND DATA QUALITY

WHY USE A KNOWLEDGE GRAPH FOR DATA QUALITY?

Data lineage is one of the knowledge graph use cases. Data lineage uses metadata to describe data movements and transformations at various levels of abstraction along data chains, as well as relationships between data. Data lineage enables data quality (DQ) capabilities by allowing for impact and root-cause analysis. It is nearly impossible to implement the DQ capability properly without documented horizontal data lineage at the physical level:

- This type of data lineage allows for root-cause analysis while investigating data quality issues.

  For example, assume an issue with calculating revenue for financial reports has been discovered. Financial controllers must know the transformations applied to the original contracts along data chains. Only data lineage at the physical level can assist in identifying potential issues with data calculations and transportation.

- Data lineage at the physical level shows where data quality checks belong in a system's design.

  Impact analysis should demonstrate the path that a source data element travels from the point of its origination to the point of its usage across multiple systems. At each step along this way, companies should build various data checks.
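Both analyses can be sketched as traversals of a lineage graph: root-cause analysis walks upstream from the affected attribute, and impact analysis walks downstream from a source attribute. The Python sketch below assumes lineage is stored as simple source-to-target edges; the attribute names and helper functions are hypothetical.

```python
from collections import deque

# Hypothetical physical lineage: each edge points from a source attribute to its target.
LINEAGE_EDGES = [
    ("crm.contracts.amount", "staging.contracts.amount"),
    ("staging.contracts.amount", "dwh.revenue.net_revenue"),
    ("fx.rates.rate", "dwh.revenue.net_revenue"),
    ("dwh.revenue.net_revenue", "report.finance.revenue"),
]

def _reachable(start, edges, reverse=False):
    """Breadth-first traversal; follows edges backwards when reverse is True."""
    adjacency = {}
    for source, target in edges:
        key, value = (target, source) if reverse else (source, target)
        adjacency.setdefault(key, []).append(value)
    visited, ordered, queue = {start}, [], deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                ordered.append(neighbor)
                queue.append(neighbor)
    return ordered

def root_cause_candidates(attribute, edges=LINEAGE_EDGES):
    """Upstream attributes that could have caused an issue in `attribute`."""
    return _reachable(attribute, edges, reverse=True)

def impacted_attributes(attribute, edges=LINEAGE_EDGES):
    """Downstream attributes that depend on `attribute`."""
    return _reachable(attribute, edges)

upstream = root_cause_candidates("report.finance.revenue")
downstream = impacted_attributes("crm.contracts.amount")
```

For the revenue example, `upstream` lists every attribute that feeds the reported revenue figure, which is where an investigation would look first. `downstream` lists every point along the chain where a check on the contract amount could be placed.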

WHAT ARE THE KEY BENEFITS OF USING A KNOWLEDGE GRAPH FOR DATA QUALITY OVER EXISTING TECHNOLOGY?

Knowledge graphs combine graph database technologies with semantic modeling to support data processing, data analytics, data visualization, and data modeling. They have significant advantages over relational databases for building data lineage solutions. We consider these advantages in the following areas:

Data processing

Data lineage documentation deals with large volumes of metadata. A lineage repository can contain hundreds of thousands of metadata objects, including data quality checks, and millions of relationships between them. Volumes of this size occur even in limited application landscapes. Relationships between metadata objects are even more important than the objects themselves. Graph databases allow for the effective storage and processing of these relationships.

Data analytics

One of the key data lineage user requirements is the ability to quickly analyze relationships between data attributes at different data points. Doing so expedites the investigation of data quality issues. Graph databases are typically more effective than relational databases at running such relationship-heavy queries over large volumes of metadata, because they traverse stored relationships instead of computing joins at query time.

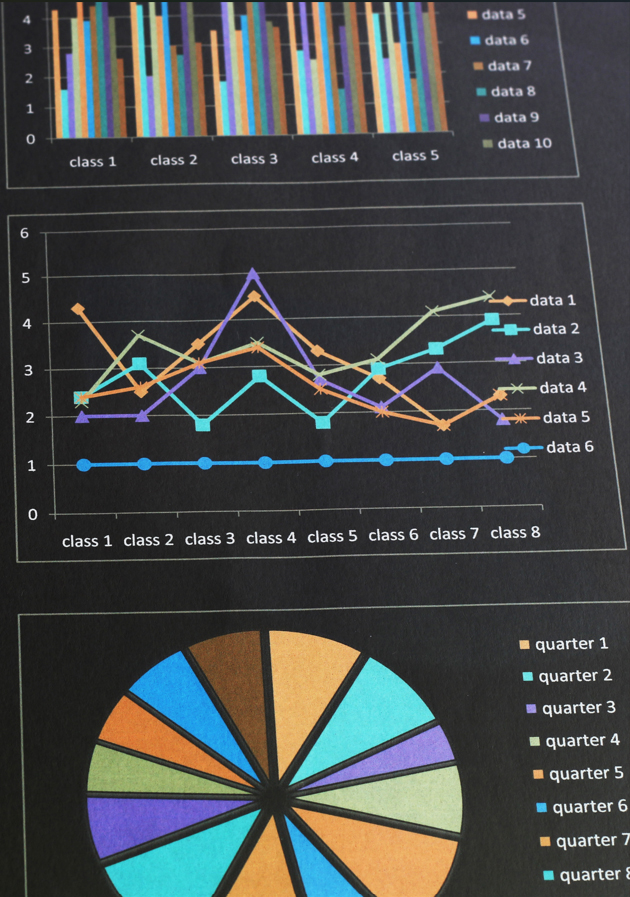

Data visualization

Visualizing data lineage is a mandatory but challenging task. Multiple solutions exist to facilitate the visualization of metadata and relationships stored in graph databases. Knowledge graphs allow companies to visualize the results of data quality measurements at the data attribute level along data chains. In this way, a company is better able to understand how data quality is changing along data chains and better able to demonstrate which data quality rules are applied to which data attributes.
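To make the idea of tracking quality along a data chain concrete, the following Python sketch measures completeness for one attribute at each step of a chain and reports where the score first drops. The snapshots and function names are hypothetical, and completeness stands in for whichever DQ metric a company actually measures.

```python
def completeness(values):
    """Share of non-missing values, between 0.0 and 1.0."""
    if not values:
        return 0.0
    return sum(v is not None for v in values) / len(values)

# Hypothetical snapshots of the same attribute at three points in a data chain.
chain = [
    ("source", [100, 200, 300, 400]),
    ("staging", [100, 200, None, 400]),
    ("report", [100, None, None, 400]),
]

def completeness_along_chain(chain):
    """Return (step, score) pairs in chain order."""
    return [(step, completeness(values)) for step, values in chain]

def first_degradation(scores):
    """Return the first step whose score is lower than the previous step's, or None."""
    for (_, previous), (step, current) in zip(scores, scores[1:]):
        if current < previous:
            return step
    return None

scores = completeness_along_chain(chain)
degraded_at = first_degradation(scores)
```

Scores of this kind are what a lineage visualization would attach to each node, so that a drop between two adjacent steps points to the transformation to investigate.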

Data modeling

Knowledge graphs support integrated data modeling because they can document and link metadata objects at multiple levels. With knowledge graphs, business professionals can describe their viewpoint using conceptual and/or semantic models. Data management professionals can then translate the semantic model into logical and physical solution models. Companies can use knowledge graphs to document data lineage at various levels of their data models.

Documenting

The ability to document and link metadata objects at multiple levels is important for the data quality capability. Business professionals can document their DQ requirements and define business rules in business language at the semantic level. Data engineers then translate these requirements and business rules into executable statements and code that perform data quality validations. The DQ functionality applies these business rules to measure the quality of the data in each data set uploaded into an IT application.
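The translation from a business-language rule to an executable check might look like the Python sketch below. It assumes a rule carries both its business description and a predicate written by a data engineer; the `BusinessRule` class, the rule itself, and the sample rows are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class BusinessRule:
    rule_id: str
    description: str                      # statement in business language
    attribute: str                        # physical attribute the rule applies to
    predicate: Callable[[object], bool]   # executable form of the rule

def run_check(rule, rows):
    """Apply the rule to every row and summarize the result."""
    failed = [row for row in rows if not rule.predicate(row.get(rule.attribute))]
    total = len(rows)
    return {
        "rule_id": rule.rule_id,
        "checked": total,
        "failed": len(failed),
        "pass_rate": (total - len(failed)) / total if total else 1.0,
    }

# Hypothetical rule and data set, for illustration only.
amount_rule = BusinessRule(
    rule_id="RULE-1",
    description="Every contract must have a non-negative amount.",
    attribute="amount",
    predicate=lambda value: value is not None and value >= 0,
)

uploaded_rows = [
    {"contract_id": "C1", "amount": 100.0},
    {"contract_id": "C2", "amount": None},
    {"contract_id": "C3", "amount": -5.0},
    {"contract_id": "C4", "amount": 20.0},
]

result = run_check(amount_rule, uploaded_rows)
```

Keeping the description and the predicate in one object preserves the link between what the business asked for and what the code validates.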

WHAT SORTS OF COMPANIES NEED DATA QUALITY AND KNOWLEDGE GRAPHS AND WHY?

Often the first step is to start a data quality project as part of a comprehensive data management initiative. An organization could be motivated to improve its data quality for several reasons:

- Enhance decision-making

  We all know the cliché, often used in the context of data quality, “garbage in – garbage out.” In our volatile and rapidly changing business environment, management relies on high-quality, factual information to make important business decisions. Knowledge graphs create additional benefits, such as demonstrating the quality of the information in reports and dashboards that a company’s management team uses for decision-making.

- Comply with audit and regulatory requirements

  All companies deal with regular internal and external audits that require the delivery of high-quality financial information. Knowledge graphs link the requirements of strict regulations imposed upon financial institutions with data quality requirements and then allow for building DQ checks based on these requirements.

- Improve business efficiency and decrease operational risks

  Business professionals in finance and data science need reliable data for their daily analysis. These professionals spend too much time cleansing data, which limits the time available to analyze it. Improved data quality helps increase operational efficiency and reduce operational risks associated with manual data cleansing and processing. Knowledge graphs link semantic and physical layers, which helps surface the causes of the data quality issues that business professionals experience.

USE CASES

Let’s summarize how knowledge graphs can be used for managing data quality:

- Data lineage

  Data lineage assists in performing impact and root-cause analysis. No data quality initiative can succeed without these analyses at the physical level. Knowledge graphs provide the technology to document, store, and query data lineage.

- Linking between data quality metrics and data attributes along data chains

  Knowledge graphs enable measurement and visualization of data quality at a data attribute level along data chains.

- Linking data quality requirements, business rules, and data quality checks

  Businesses use knowledge graphs to document their data quality requirements written in business language. Business rules represent these requirements. Knowledge graphs allow for transforming these business rules into software code to build data quality checks. Knowledge graphs also demonstrate data quality changes along data chains at the data attribute level.
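The links between requirements, business rules, checks, and attributes can be pictured as subject-predicate-object triples. The Python sketch below is a simplified stand-in for a real graph database; the identifiers, the predicate names (`expressed_as`, `implemented_by`, `validates`), and the helper functions are all hypothetical.

```python
# Hypothetical triples: (subject, predicate, object).
TRIPLES = [
    ("REQ-1", "expressed_as", "RULE-1"),
    ("RULE-1", "implemented_by", "CHECK-1"),
    ("CHECK-1", "validates", "dwh.revenue.net_revenue"),
    ("REQ-2", "expressed_as", "RULE-2"),
    ("RULE-2", "implemented_by", "CHECK-2"),
    ("CHECK-2", "validates", "crm.contracts.amount"),
    ("RULE-2", "implemented_by", "CHECK-3"),
    ("CHECK-3", "validates", "dwh.revenue.net_revenue"),
]

def subjects(predicate, obj, triples=TRIPLES):
    """All subjects linked to `obj` through `predicate`."""
    return [s for s, p, o in triples if p == predicate and o == obj]

def requirements_for(attribute, triples=TRIPLES):
    """Trace an attribute back to the business requirements that govern it."""
    found = []
    for check in subjects("validates", attribute, triples):
        for rule in subjects("implemented_by", check, triples):
            for requirement in subjects("expressed_as", rule, triples):
                if requirement not in found:
                    found.append(requirement)
    return found

revenue_requirements = requirements_for("dwh.revenue.net_revenue")
```

Walking the same links in the opposite direction would answer the complementary question: which checks and attributes are affected when a requirement changes.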
